In a quiet laboratory in Zurich, a robotic hand gently picks up a raw egg without cracking it, then seamlessly transitions to crushing a soda can with calculated force. This demonstration represents more than mechanical precision. It embodies the steady revolution happening at the intersection of artificial intelligence and robotics, where machines are learning to move and interact with the nuanced grace of biological organisms.

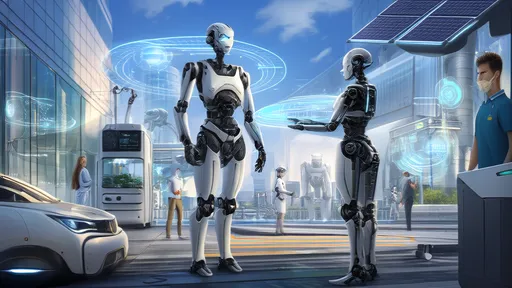

The journey toward truly biomimetic robotics has been decades in the making, but recent breakthroughs in AI are accelerating progress at an unprecedented rate. What was once the domain of science fiction—robots that move with animal-like fluidity, adapt to unpredictable environments, and interact with humans in intuitive ways—is rapidly becoming engineering reality. The implications span from manufacturing and healthcare to disaster response and daily companionship.

Learning Nature's Blueprint

Traditional robotics operated on precise programming—every movement planned, every variable accounted for. Today's AI-driven approach represents a fundamental shift. Instead of programming every possible scenario, engineers are creating systems that learn movement through observation and practice, much like a child learns to walk. Deep reinforcement learning, in particular, has emerged as a powerful tool for teaching robots complex motor skills.

At the University of California, Berkeley, researchers have developed algorithms that allow robots to learn bipedal locomotion through trial and error in simulated environments. The AI experiments with thousands of possible movements, receives feedback on stability and efficiency, and gradually refines its approach until it converges on an effective gait. The resulting movement is not just functional: it often bears a striking resemblance to how animals and humans actually move, with subtle weight shifts and balancing mechanisms that engineers might never have programmed manually.
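
The trial-and-error loop described above can be illustrated with a deliberately tiny stand-in. The sketch below is not deep reinforcement learning and is not the Berkeley method. It uses simple random search instead. A gait is described by two hypothetical parameters, stride length and cadence. The search perturbs them at random and keeps a change only if a made-up reward improves. That reward favors forward speed and penalizes instability and energy use. Its form and all of its constants are assumptions chosen for illustration.

```python
import random


def gait_reward(stride: float, cadence: float) -> float:
    """Hypothetical reward for a gait with stride length (m) and cadence (steps/s).

    The functional form and constants are invented for illustration only.
    """
    speed = stride * cadence                         # forward progress
    instability = 5.0 * max(0.0, stride - 0.5) ** 2  # strides past 0.5 m risk falls
    energy = 0.2 * cadence ** 2                      # faster stepping costs more effort
    return speed - instability - energy


def random_search(iterations: int = 2000, step: float = 0.05, seed: int = 0):
    """Perturb the gait parameters at random and keep any change that raises the reward."""
    rng = random.Random(seed)
    params = [0.2, 1.0]                              # initial stride, cadence
    best = gait_reward(*params)
    for _ in range(iterations):
        candidate = [max(0.0, p + rng.gauss(0.0, step)) for p in params]
        reward = gait_reward(*candidate)
        if reward > best:                            # greedy acceptance of improvements
            params, best = candidate, reward
    return params, best


if __name__ == "__main__":
    (stride, cadence), reward = random_search()
    print(f"stride={stride:.2f} m, cadence={cadence:.2f} steps/s, reward={reward:.3f}")
```

This particular reward peaks at a stride of 2/3 m and a cadence of 5/3 steps per second. The search climbs toward that point without being told where it is. Deep RL replaces the two hand-picked parameters with a neural-network policy that maps sensor readings to motor commands. The underlying idea of adjusting behavior toward higher reward stays the same.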

This learning-based approach is proving particularly valuable for navigating complex terrains. Boston Dynamics, long known for its impressive robotic demonstrations, has increasingly incorporated machine learning to help its robots recover from slips and falls. Where earlier models might have collapsed when encountering unexpected obstacles, newer versions can dynamically adjust their balance and footing, sometimes in ways that look almost instinctual.

The Sensory Revolution

Advanced movement represents only half the equation. True biomimetic interaction requires sophisticated sensing and interpretation capabilities. Here, too, AI is driving remarkable advances. Computer vision systems powered by convolutional neural networks can now parse complex visual scenes in real time, identifying objects, assessing their properties, and predicting how they might behave when manipulated.

More impressively, these systems are learning to combine multiple sensory inputs (vision, touch, and even audio) to build a rich understanding of their environment. Researchers at MIT have developed a robotic system that uses visual sensing to estimate an object's weight before lifting it, then fine-tunes its grip strength based on tactile feedback during the actual lift. The system can distinguish between materials as delicate as tissue paper and as sturdy as wood, adjusting its manipulation strategy accordingly.
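
The two-stage grip strategy described above can be sketched with a simple physical model. Assume a two-finger pinch grasp with Coulomb friction. Each finger can then supply at most μN of friction, so holding an object of mass m requires a per-finger normal force N ≥ mg / (2μ). The sketch below sets an initial force from the vision-based mass estimate. It then tightens the grip whenever a simulated tactile signal reports slip. The safety factor, tightening gain, force limit, and slip model are all hypothetical and are not taken from the MIT system.

```python
G = 9.81  # gravitational acceleration, m/s^2


def initial_grip_force(est_mass_kg: float, mu: float, safety_factor: float = 1.5) -> float:
    """Per-finger normal force (N) for a two-finger grasp: 2 * mu * N >= m * g, times a margin."""
    return safety_factor * est_mass_kg * G / (2.0 * mu)


def grasp_with_feedback(est_mass_kg, true_mass_kg, mu, max_force_n=40.0, gain=1.2, max_steps=50):
    """Start from the visual estimate, then squeeze harder each time slip is detected.

    Returns (final_force_n, holding). Slip is simulated by comparing against the force the
    true mass requires; a real robot would infer slip from tactile sensor signals.
    """
    force = min(initial_grip_force(est_mass_kg, mu), max_force_n)
    required = initial_grip_force(true_mass_kg, mu, safety_factor=1.0)
    for _ in range(max_steps):
        slipping = force < required          # stand-in for a tactile slip detector
        if not slipping:
            return force, True
        if force >= max_force_n:             # gripper cannot squeeze any harder
            break
        force = min(force * gain, max_force_n)
    return force, force >= required


# A cup that looks light (0.1 kg estimate) but is actually full (0.3 kg), with mu = 0.5
force, holding = grasp_with_feedback(est_mass_kg=0.1, true_mass_kg=0.3, mu=0.5)
print(f"final force {force:.2f} N, holding={holding}")
```

In that example the visual estimate undershoots, so the grip tightens over a few slip events. It stops once it clears the roughly 2.9 N that the full cup needs. With a good visual estimate, the correction loop rarely has to act.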

Perhaps the most biologically inspired development comes from the field of neuromorphic computing, where engineers are building systems that mimic the brain's neural architecture. Event-based vision sensors, a closely related development, work differently from conventional cameras. Rather than capturing full frames at fixed intervals, each pixel registers only changes in the brightness it sees, loosely like cells in the retina. This approach dramatically reduces data processing requirements while improving responsiveness to rapid movements, enabling robots to react to visual stimuli with very low latency.
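
The change-driven behavior described above is often summarized by an idealized pixel model. A pixel emits an event whenever its log brightness has moved by more than a contrast threshold since its last event. Each event carries a timestamp, the pixel position, and a polarity (brighter or darker). The sketch below applies that model to a short sequence of ordinary frames. Several parts of it are simplifying assumptions: the threshold value, the small offset that keeps the logarithm finite, and the frame-based input. A real sensor performs this comparison asynchronously in each pixel's circuitry and may emit several events for one large change.

```python
import math


def frames_to_events(frames, timestamps, threshold=0.2, eps=1e-3):
    """Emit (t, x, y, polarity) events when a pixel's log intensity changes by >= threshold.

    frames: list of equally sized 2D lists of non-negative intensities.
    timestamps: one time value per frame; the first frame only sets the reference.
    """
    ref = [[math.log(v + eps) for v in row] for row in frames[0]]
    events = []
    for frame, t in zip(frames[1:], timestamps[1:]):
        for y, row in enumerate(frame):
            for x, value in enumerate(row):
                level = math.log(value + eps)
                delta = level - ref[y][x]
                if abs(delta) >= threshold:
                    events.append((t, x, y, 1 if delta > 0 else -1))
                    ref[y][x] = level  # reset this pixel's reference after it fires
    return events


# One pixel brightens, then a different pixel darkens; unchanged pixels stay silent.
frames = [
    [[10, 10], [10, 10]],
    [[10, 20], [10, 10]],
    [[10, 20], [5, 10]],
]
print(frames_to_events(frames, [0.0, 0.001, 0.002]))
```

Because static pixels produce no output at all, a mostly unchanging scene generates very little data. This is the source of the processing savings described above.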

Social Intelligence and Embodied AI

The frontier of biomimetic robotics extends beyond physical movement to social interaction. For robots to truly integrate into human environments, they must understand and respond to social cues—facial expressions, gestures, tone of voice, and even cultural context. This requires what researchers call "theory of mind"—the ability to infer what others are thinking and feeling.

Companies like SoftBank Robotics with their Pepper robot and Hanson Robotics with the famous Sophia have pioneered this space, but recent advances are taking social robotics to new levels of sophistication. Through natural language processing and affective computing, modern social robots can engage in contextually appropriate conversations, recognize individual humans, and remember personal preferences across multiple interactions.

At Stanford University, researchers are developing systems that can detect subtle micro-expressions that even humans might miss, potentially allowing robots to serve as sensitive companions for elderly individuals or those with social communication challenges. These systems learn from thousands of hours of human interaction videos, identifying patterns that correlate specific expressions with underlying emotional states.

Overcoming the Simulation-to-Reality Gap

One of the most significant challenges in AI-driven robotics has been what researchers call the "sim-to-real" gap—the difference between how algorithms perform in perfect simulated environments versus messy real-world conditions. Early attempts often saw beautifully simulated movements fall apart when deployed on physical hardware, where friction, weight distribution, and material properties introduced complexities absent from digital models.

Much of the progress has come from more sophisticated physics engines and domain randomization. Domain randomization means training AI in simulations with wildly varying conditions, so that it learns strategies robust across many settings rather than optimized for one specific set of parameters. This approach has proven remarkably effective: robots trained entirely in simulation have successfully performed complex tasks in physical environments they had never encountered.
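
A toy setting shows why randomization produces robustness. In the sketch below, a hypothetical one-dimensional controller moves toward a target using the update x ← x + a·k·(target − x). Here k is the controller gain being chosen. The actuator gain a stands in for uncertain physics such as motor strength or payload. "Training" is reduced to picking k from a short list, and this is done twice. The first run uses a single idealized simulator (a = 1). The second uses 200 simulated worlds with a drawn uniformly from 0.5 to 2.5. The ranges, candidate gains, and cost function are all assumptions chosen for illustration.

```python
import random

CANDIDATE_GAINS = [0.2, 0.4, 0.6, 0.8, 1.0, 1.2]


def rollout_cost(k: float, actuator_gain: float, steps: int = 50, target: float = 1.0) -> float:
    """Sum of squared tracking errors for the update x <- x + a * k * (target - x)."""
    x, cost = 0.0, 0.0
    for _ in range(steps):
        x += actuator_gain * k * (target - x)
        cost += (target - x) ** 2
    return cost


def pick_gain(worlds: list[float]) -> float:
    """Return the candidate gain with the lowest average cost across the given simulated worlds."""
    return min(CANDIDATE_GAINS,
               key=lambda k: sum(rollout_cost(k, a) for a in worlds) / len(worlds))


rng = random.Random(42)
nominal_k = pick_gain([1.0])                                     # one idealized simulator
randomized_worlds = [rng.uniform(0.5, 2.5) for _ in range(200)]  # domain randomization
robust_k = pick_gain(randomized_worlds)

real_a = 2.3  # hypothetical real actuator, stronger than the idealized simulator assumed
print(f"nominal k={nominal_k}: cost {rollout_cost(nominal_k, real_a):.3g}")
print(f"randomized k={robust_k}: cost {rollout_cost(robust_k, real_a):.3g}")
```

Against the single idealized simulator, k = 1.0 looks perfect because it drives the error to zero in one step. The error is multiplied by 1 − a·k at every step, though. So k = 1.0 diverges whenever the actuator is more than twice as strong as assumed. The randomized search settles on a gentler gain that converges across the whole sampled range. That is the trade domain randomization makes: slightly slower behavior in the nominal case in exchange for not failing when reality differs from the model.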

NVIDIA's Isaac Lab is one prominent example of this approach, providing a development platform where thousands of virtual robots can train simultaneously across diverse simulated environments. Such platforms can compress training that would take years in the real world into days or hours, while producing behaviors that transfer effectively to physical hardware.
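
The compression from years to days comes mostly from parallelism, and the arithmetic is simple. Suppose N_env simulated robots each run at r times real-time speed for a wall-clock duration T_wall. The total experience gathered is then as follows. The figures plugged in are hypothetical round numbers, not measured Isaac Lab throughput: 4,096 environments, real-time speed, and one day of wall-clock training.

```latex
T_{\text{sim}} = N_{\text{env}} \cdot r \cdot T_{\text{wall}}
             = 4096 \times 1 \times 1\ \text{day}
             = 4096\ \text{days} \approx 11.2\ \text{years}
```

Running the physics faster than real time (r > 1) multiplies the total further. This is how a training budget that would span years on physical hardware can fit into days or hours.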

Ethical and Societal Considerations

As robots become increasingly biomimetic in both movement and interaction, they raise important questions about our relationship with technology. The very realism that makes these robots effective—their fluid movements, expressive capabilities, and responsive behaviors—also creates the potential for emotional attachment and anthropomorphism that may warrant careful consideration.

Researchers are beginning to establish guidelines for what some call "responsible biomimicry"—design principles that maintain appropriate boundaries between human and machine. These include transparency about a robot's capabilities and limitations, avoidance of deceptive realism, and careful consideration of applications where human-like appearance might create unrealistic expectations.

Meanwhile, the economic implications of increasingly capable robots continue to spark debate. While biomimetic robots promise to take over dangerous, repetitive, or physically demanding jobs, their advancing capabilities also suggest potential displacement in more complex roles. The very adaptability that makes them valuable could eventually extend their reach far beyond the structured environments of traditional industrial automation.

The Road Ahead

Looking forward, the convergence of several emerging technologies promises to accelerate progress even further. The integration of large language models with robotic control systems, for instance, could allow for more natural instruction and collaboration. Instead of programming specific movements, humans might simply describe tasks in everyday language, with the robot interpreting the instructions and determining the appropriate physical actions.
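
One plausible way to connect a language model to a robot is to have the model emit a structured plan over a fixed library of robot skills. The controller validates that plan before anything moves. The sketch below shows only the validation step. The skill names, argument schema, and JSON format are hypothetical, and the call to the language model itself is omitted. The string in the usage example stands in for a model's reply.

```python
import json

# Hypothetical skill library: skill name -> the argument names it requires
SKILLS = {
    "move_to": {"location"},
    "grasp": {"object"},
    "place": {"object", "location"},
}


def parse_plan(llm_output: str) -> list[tuple[str, dict]]:
    """Parse a model's JSON plan and reject any step the robot has no skill for."""
    steps = json.loads(llm_output)  # raises json.JSONDecodeError (a ValueError) on malformed text
    if not isinstance(steps, list):
        raise ValueError("plan must be a JSON list of steps")
    plan = []
    for i, step in enumerate(steps):
        if not isinstance(step, dict):
            raise ValueError(f"step {i} is not a JSON object")
        skill = step.get("skill")
        if not isinstance(skill, str) or skill not in SKILLS:
            raise ValueError(f"step {i}: unknown skill {skill!r}")
        args = step.get("args", {})
        if not isinstance(args, dict) or set(args) != SKILLS[skill]:
            raise ValueError(f"step {i}: {skill} expects arguments {sorted(SKILLS[skill])}")
        plan.append((skill, args))
    return plan


# Stand-in for a model's reply to "Put the cup on the shelf."
reply = """[
  {"skill": "move_to", "args": {"location": "table"}},
  {"skill": "grasp", "args": {"object": "cup"}},
  {"skill": "place", "args": {"object": "cup", "location": "shelf"}}
]"""
for skill, args in parse_plan(reply):
    print(skill, args)
```

Keeping the model's output inside a fixed skill vocabulary lets people give instructions in everyday language. Meanwhile the robot still executes only motions its engineers have tested.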

Advances in materials science also play a crucial role. Artificial muscles based on electroactive polymers or shape-memory alloys are becoming increasingly sophisticated, offering more natural movement profiles than traditional motors and hydraulics. Similarly, developments in sensitive electronic skin technologies are providing robots with tactile capabilities approaching the sensitivity of human touch.

Perhaps most exciting is the growing integration across these domains. Robots with advanced motor skills, rich sensory perception, social intelligence, and natural language capabilities represent more than the sum of their parts—they point toward a future where human-robot collaboration becomes seamless and intuitive.

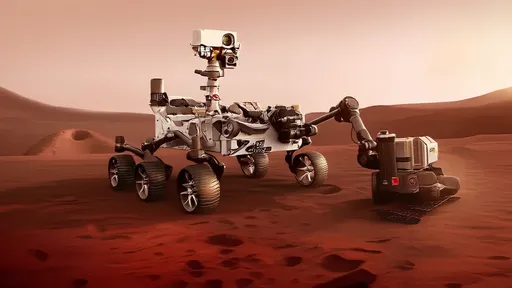

As these technologies mature, we're likely to see biomimetic robots move beyond laboratory demonstrations into everyday applications. From healthcare assistants that help patients with mobility challenges to rescue robots that navigate disaster sites with animal-like agility, the practical implementations could transform numerous aspects of our lives.

The ultimate goal isn't to create robots that perfectly mimic biological organisms in every detail, but rather to capture the principles that make biological movement and interaction so effective—adaptability, efficiency, and graceful recovery from unexpected challenges. In this pursuit, AI serves not just as a tool for control, but as a bridge between the mathematical certainty of engineering and the beautiful messiness of biology.

What began as an effort to make robots more capable is evolving into a deeper exploration of movement, intelligence, and interaction itself. The insights gained from creating biomimetic robots may eventually circle back to enhance our understanding of biological systems, creating a virtuous cycle of discovery across disciplines. As these fields continue to converge, the boundary between born and built may become increasingly blurred—not just in how robots move and interact, but in how we perceive and relate to them.

By /Oct 20, 2025
